目录

目录Python全系列 教程

3567个小节阅读:5930.1k

鸿蒙应用开发

C语言快速入门

JAVA全系列 教程

面向对象的程序设计语言

Python全系列 教程

Python3.x版本,未来主流的版本

人工智能 教程

顺势而为,AI创新未来

大厂算法 教程

算法,程序员自我提升必经之路

C++ 教程

一门通用计算机编程语言

微服务 教程

目前业界流行的框架组合

web前端全系列 教程

通向WEB技术世界的钥匙

大数据全系列 教程

站在云端操控万千数据

AIGC全能工具班

A A

White Night

保存图片

ximport pickledef unpickle(file): with open(file, 'rb') as fo: dict = pickle.load(fo, encoding='bytes') return dict

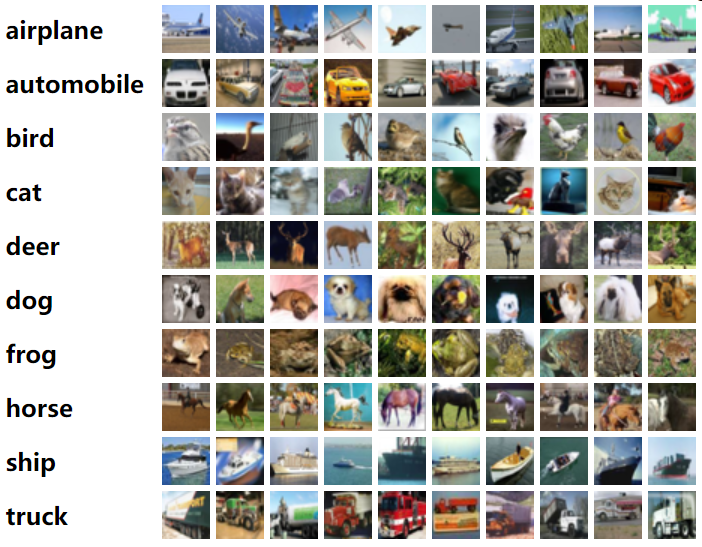

# 图片的标签名称(顺序已排好)label_name = ["airplane", "automobile", "bird", "cat", "deer", "dog", "frog", "horse", "ship", "truck"]

import globimport numpy as np# pip install opencv-python -i https://pypi.tuna.tsinghua.edu.cn/simple/import cv2 import os

def save_image(filenames,save_path): for l in filenames: l_dict = unpickle(l) # 每个l_dict包含了10000张图片 for im_idx,im_data in enumerate(l_dict[b'data']): im_label = l_dict[b'labels'][im_idx] # 标签(0-9) im_name = l_dict[b'filenames'][im_idx] # 图片名称 im_label_name = label_name[im_label] # 标签名称 im_data = np.reshape(im_data,[3,32,32]) # 整理图片形状 im_data = np.transpose(im_data,(1,2,0)) # opencv中对图片的处理是HWC

if not os.path.exists("{}\\{}".format(save_path,im_label_name)): os.mkdir("{}\\{}".format(save_path,im_label_name)) # 使用cv2存储图片 cv2.imwrite("{}\\{}\\{}".format(save_path,im_label_name, im_name.decode("utf-8")),im_data)

if __name__ == '__main__': # 获取训练集文件名 train_filenames = glob.glob("CIFAR10\\data_batch_*") train_save_path = "data\\TRAIN" save_image(train_filenames,train_save_path) print("训练数据集保存完毕!") test_filenames = glob.glob("CIFAR10\\test_batch*") test_save_path = "data\\TEST" save_image(test_filenames,test_save_path) print("测试数据集保存完毕!")编写数据集类(继承torch.utils.data.Dataset),加载数据

xxxxxxxxxximport enumfrom torchvision import transformsfrom torch.utils.data import DataLoader, Datasetfrom PIL import Imageimport numpy as npimport glob

# 标签名称label_name = ["airplane", "automobile", "bird", "cat", "deer", "dog", "frog", "horse", "ship", "truck"]

# 训练集数据预处理train_transform = transforms.Compose([ transforms.RandomHorizontalFlip(), # 随机水平翻转 transforms.RandomVerticalFlip(), # 随机垂直翻转 transforms.ToTensor(), # 将数据转换成张量Tensor对象 # 分别指定三个通道的均值和标准差,进行归一化数据 transforms.Normalize((0.49,0.48,0.44), (0.21,0.18,0.22))])

# 测试集数据预处理test_transform = transforms.Compose([ transforms.CenterCrop((32,32)), # 从中心位置裁剪指定大小的图像 transforms.ToTensor(), # 将数据转换成张量Tensor对象 # 分别指定三个通道的均值和标准差,进行归一化数据 transforms.Normalize((0.49,0.48,0.44), (0.21,0.18,0.22))])

label_dict = {}

for idx,name in enumerate(label_name): label_dict[name] = idx

def default_loader(path): return Image.open(path).convert("RGB")

# 自定义的Dataset是一个包装类,用来将数据包装为Dataset类,# 然后传入DataLoader中从而使DataLoader类更加快捷的对数据进行操作class MyDataset(Dataset): def __init__(self,im_list,transform=None,loader=default_loader): super(MyDataset,self).__init__() imgs = []

for im_item in im_list: # im_item形如:'data\\TRAIN\\airplane\\xxx.png' im_label_name = im_item.split("\\")[-2] # imgs存储的是图片路径和其对应的label imgs.append([im_item,label_dict[im_label_name]]) self.imgs = imgs self.transform = transform # 预处理 self.loader = loader # 加载函数

# index参数是一个索引,这个索引的取值范围要根据__len__这个方法的返回值确定 def __getitem__(self, index): im_path,im_label = self.imgs[index] im_data = self.loader(im_path) if self.transform is not None: im_data = self.transform(im_data) # 图像预处理 return im_data, im_label def __len__(self): return len(self.imgs)

# 读取数据集的所有文件名列表im_train_list = glob.glob("data\\TRAIN\\*\\*.png")im_test_list = glob.glob("data\\TEST\\*\\*.png")

train_dataset = MyDataset(im_train_list, transform=train_transform)test_dataset = MyDataset(im_test_list, transform=test_transform)

# 训练数据加载器train_loader = DataLoader(dataset=train_dataset, batch_size=128, shuffle=True)

# 测试数据加载器 test_loader = DataLoader(dataset=test_dataset, batch_size=128, shuffle=False)

print("训练集数据量:",len(train_dataset))print("测试集数据量:",len(test_dataset))编写神经网络类

xxxxxxxxxximport torch.nn as nnimport torch.nn.functional as F

class VGGbase(nn.Module): def __init__(self): super(VGGbase, self).__init__()

# 32x32 self.conv1 = nn.Sequential( # 输入通道数in_channels=3,输出通道数out_channels=64 # conv1卷积的效果保持了输入与输出的尺寸相同(都是32) nn.Conv2d(3, 64, kernel_size=3, stride=1, padding=1), # 对输入batch的每一个特征通道进行normalize,64表示输出的channel数量 nn.BatchNorm2d(64), nn.ReLU() ) # 16 x 16 self.max_pooling1 = nn.MaxPool2d(kernel_size=2, stride=2)

# 16 x 16 self.conv2_1 = nn.Sequential( nn.Conv2d(64, 128, kernel_size=3, stride=1, padding=1), nn.BatchNorm2d(128), nn.ReLU() )

# 16 x 16 self.conv2_2 = nn.Sequential( nn.Conv2d(128, 128, kernel_size=3, stride=1, padding=1), nn.BatchNorm2d(128), nn.ReLU() )

# 8 x 8 self.max_pooling2 = nn.MaxPool2d(kernel_size=2, stride=2)

# 8 x 8 self.conv3_1 = nn.Sequential( nn.Conv2d(128, 256, kernel_size=3, stride=1, padding=1), nn.BatchNorm2d(256), nn.ReLU() )

# 8 x 8 self.conv3_2 = nn.Sequential( nn.Conv2d(256, 256, kernel_size=3, stride=1, padding=1), nn.BatchNorm2d(256), nn.ReLU() )

# 4 x 4 self.max_pooling3 = nn.MaxPool2d(kernel_size=2, stride=2)

# 4 x 4 self.conv4_1 = nn.Sequential( nn.Conv2d(256, 512, kernel_size=3, stride=1, padding=1), nn.BatchNorm2d(512), nn.ReLU() )

# 4 x 4 self.conv4_2 = nn.Sequential( nn.Conv2d(512, 512, kernel_size=3, stride=1, padding=1), nn.BatchNorm2d(512), nn.ReLU() )

# 2 x 2 self.max_pooling4 = nn.MaxPool2d(kernel_size=2, stride=2)

# batchsize * 512 * 2 *2 --> batchsize * (512 * 4) self.fc = nn.Linear(512 * 4, 10)

def forward(self, x): batchsize = x.size(0) out = self.conv1(x) out = self.max_pooling1(out) out = self.conv2_1(out) out = self.conv2_2(out) out = self.max_pooling2(out)

# out = self.conv3_1(out) out = self.conv3_2(out) out = self.max_pooling3(out)

out = self.conv4_1(out) out = self.conv4_2(out) out = self.max_pooling4(out) # batchsize * c * h * w --> batchsize * n out = out.view(batchsize, -1)

out = self.fc(out) # dim=1是对行做归一化 out = F.log_softmax(out, dim=1)

return out

def VGGNet(): return VGGbase()训练

xxxxxxxxxximport torchimport torch.nn as nnfrom vggnet import VGGNetfrom load_cifar10 import train_loaderimport os

# 防止核崩溃的设置os.environ['KMP_DUPLICATE_LIB_OK'] = 'True'

epoch_num = 200 # 训练轮数lr = 0.01 # 学习率batch_size = 128 # 每批的训练样本数

net = VGGNet()

#lossloss_func = nn.CrossEntropyLoss()

#optimizeroptimizer = torch.optim.Adam(net.parameters(), lr= lr)

# optimizer = torch.optim.SGD(net.parameters(), lr = lr,# momentum=0.9, weight_decay=5e-4)

# step_size:每多少轮循环后更新一次学习率(lr)# gamma:每次更新lr的gamma倍scheduler = torch.optim.lr_scheduler.StepLR(optimizer, step_size=5, gamma=0.9)

step_n = 0for epoch in range(epoch_num): # 外层循环“轮” print(" epoch is ", epoch) net.train() # 设置模型为训练模型 for i, data in enumerate(train_loader): # 内层循环加载每一批数据 inputs, labels = data

outputs = net(inputs) # 前向传播 loss = loss_func(outputs, labels) optimizer.zero_grad() # 清空梯度 loss.backward() # 反向传播,计算梯度 optimizer.step() # 更新参数

_,pred = torch.max(outputs.data,dim=1) correct = pred.eq(labels.data).cpu().sum()

print("step",i,"loss is:",loss.item(), "mini-batch correct is:",100.0 * correct / batch_size)

if not os.path.exists("models"): os.mkdir("models") torch.save(net.state_dict(),"models/{}.pth".format(epoch+1)) scheduler.step() # 更新学习率 print("lr is ",optimizer.state_dict()["param_groups"][0]["lr"])