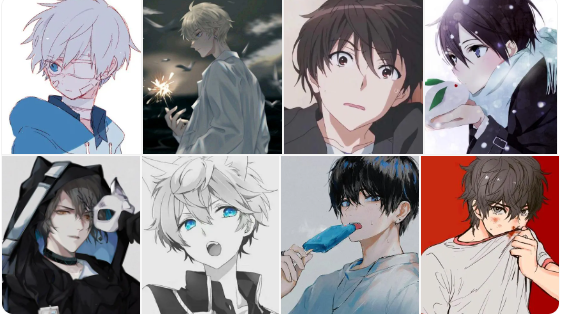

White Night

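import torch
import torch.nn as nn
import torch.optim as optim
import torchvision
import matplotlib.pyplot as plt
import os
from torchvision import datasets, transforms, models

# Allow duplicate OpenMP runtimes to be loaded (avoids the "OMP: Error #15" abort)
os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

# Define the preprocessing pipeline
data_transform = transforms.Compose([
    transforms.RandomHorizontalFlip(),  # randomly flip training samples horizontally
    transforms.ToTensor(),  # convert to a Tensor
    # Normalize each channel with the given mean and standard deviation.
    # One value per RGB channel is given explicitly, because ImageFolder loads images as RGB.
    transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)),
])

# Load the dataset (the "well")
trainset = datasets.ImageFolder(root="faces", transform=data_transform)

# Data loader (the "bucket")
trainloader = torch.utils.data.DataLoader(trainset, batch_size=5, shuffle=True)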

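# Save and display images
def imshow(inputs, picname):
    inputs = inputs / 2 + 0.5  # undo Normalize: map [-1, 1] back to [0, 1]
    inputs = inputs.numpy().transpose((1, 2, 0))  # (C, H, W) -> (H, W, C)
    plt.imshow(inputs)
    # Save the image. Note: faces/0 lies inside the ImageFolder root ("faces"),
    # so files saved here are picked up as training images on the next run.
    plt.savefig(os.path.join('faces', '0', picname + ".jpg"))
    plt.show()
    plt.pause(0.01)

inputs, __ = next(iter(trainloader))
imshow(torchvision.utils.make_grid(inputs), "RealDataSample")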
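The first line of `imshow` only makes sense as the inverse of the `Normalize` step, so a small arithmetic sketch may help. It is pure Python and does not touch torch; the helper names `normalize` and `denormalize` are hypothetical and simply mirror the two formulas on single pixel values.

```python
def normalize(x, mean=0.5, std=0.5):
    """Same formula that transforms.Normalize applies per channel: (x - mean) / std."""
    return (x - mean) / std


def denormalize(y):
    """Same formula as the first line of imshow: y / 2 + 0.5."""
    return y / 2 + 0.5


# ToTensor yields values in [0, 1]; these four are illustrative sample values.
pixels = [0.0, 0.25, 0.5, 1.0]
normalized = [normalize(p) for p in pixels]
restored = [denormalize(v) for v in normalized]

print(normalized)  # [-1.0, -0.5, 0.0, 1.0]
print(restored)    # [0.0, 0.25, 0.5, 1.0]
```

With mean 0.5 and standard deviation 0.5, the range [0, 1] maps to [-1, 1], which matches the range of the `Tanh` output used by the generator.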

# Define the discriminator
class D(nn.Module):
    def __init__(self, nc, ndf):
        super(D, self).__init__()
        self.layer1 = nn.Sequential(
            nn.Conv2d(in_channels=nc, out_channels=ndf,
                      kernel_size=4, stride=2, padding=1),
            nn.BatchNorm2d(num_features=ndf),  # normalize per channel
            nn.LeakyReLU(0.2, inplace=True))
        self.layer2 = nn.Sequential(
            nn.Conv2d(in_channels=ndf, out_channels=ndf * 2,
                      kernel_size=4, stride=2, padding=1),
            nn.BatchNorm2d(num_features=ndf * 2),  # normalize per channel
            nn.LeakyReLU(0.2, inplace=True))
        self.layer3 = nn.Sequential(
            nn.Conv2d(in_channels=ndf * 2, out_channels=ndf * 4,
                      kernel_size=4, stride=2, padding=1),
            nn.BatchNorm2d(num_features=ndf * 4),  # normalize per channel
            nn.LeakyReLU(0.2, inplace=True))
        self.layer4 = nn.Sequential(
            nn.Conv2d(in_channels=ndf * 4, out_channels=ndf * 8,
                      kernel_size=4, stride=2, padding=1),
            nn.BatchNorm2d(num_features=ndf * 8),  # normalize per channel
            nn.LeakyReLU(0.2, inplace=True))
        # Flattened feature count: 256 * 6 * 6 when ndf = 32
        self.flat_features = ndf * 8 * 6 * 6
        self.fc = nn.Sequential(nn.Linear(self.flat_features, 1), nn.Sigmoid())

    def forward(self, x):
        temp1 = self.layer1(x)
        temp2 = self.layer2(temp1)
        temp3 = self.layer3(temp2)
        temp4 = self.layer4(temp3)
        temp5 = temp4.view(-1, self.flat_features)
        out = self.fc(temp5).reshape(-1)  # one probability per image
        return out
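The `256*6*6` input size of the final linear layer follows from the convolution arithmetic. The sketch below checks it in plain Python, assuming 96x96 input images. That size is inferred from the layer dimensions and is not stated in the original. The helper `conv2d_out` is hypothetical and applies the standard output-size formula for `nn.Conv2d` with dilation 1.

```python
def conv2d_out(size, kernel=4, stride=2, padding=1):
    """Output size of a convolution: floor((size + 2*padding - kernel) / stride) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


ndf = 32
size = 96  # assumed input height/width, inferred from the 6x6 feature map
sizes = [size]
for _ in range(4):  # layer1 .. layer4 all use kernel 4, stride 2, padding 1
    size = conv2d_out(size)
    sizes.append(size)

flat_features = ndf * 8 * size * size

print(sizes)          # [96, 48, 24, 12, 6]
print(flat_features)  # 9216
```

Each layer halves the spatial size, so images of any other size would need a different constant in the linear layer, or a resize step in the transform.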

# Define the generator
class G(nn.Module):
    def __init__(self, nc, ngf, nz, feature_size):
        super(G, self).__init__()
        self.nz = nz
        # Project the noise vector to an (nz, 6, 6) feature map
        self.prj = nn.Linear(in_features=feature_size, out_features=nz * 6 * 6)
        self.layer1 = nn.Sequential(
            nn.ConvTranspose2d(in_channels=nz, out_channels=ngf * 4,
                               kernel_size=4, stride=2, padding=1),
            nn.BatchNorm2d(num_features=ngf * 4),
            nn.ReLU())
        self.layer2 = nn.Sequential(
            nn.ConvTranspose2d(in_channels=ngf * 4, out_channels=ngf * 2,
                               kernel_size=4, stride=2, padding=1),
            nn.BatchNorm2d(num_features=ngf * 2),
            nn.ReLU())
        self.layer3 = nn.Sequential(
            nn.ConvTranspose2d(in_channels=ngf * 2, out_channels=ngf,
                               kernel_size=4, stride=2, padding=1),
            nn.BatchNorm2d(num_features=ngf),
            nn.ReLU())
        self.layer4 = nn.Sequential(
            nn.ConvTranspose2d(in_channels=ngf, out_channels=nc,
                               kernel_size=4, stride=2, padding=1),
            nn.BatchNorm2d(num_features=nc),
            nn.Tanh())

    def forward(self, x):
        # Shapes in the comments assume a batch of 5, nz = 1024 and ngf = 128
        temp1 = self.prj(x)  # x.size() = (5, 100)
        # print("temp1.size()=", temp1.size())  # (5, 1024*6*6)
        temp2 = temp1.view(-1, self.nz, 6, 6)  # use nz instead of a hard-coded 1024
        # print("temp2.size()=", temp2.size())  # (5, 1024, 6, 6)
        temp3 = self.layer1(temp2)
        # print("temp3.size()=", temp3.size())  # (5, 512, 12, 12)
        temp4 = self.layer2(temp3)
        temp5 = self.layer3(temp4)
        out = self.layer4(temp5)
        return out
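The shape comments in `forward` stop after `layer1`. As a rough check of the remaining layers, the sketch below traces the feature-map shape through all four transposed convolutions in plain Python. The helper `conv_transpose2d_out` is hypothetical and uses the output-size formula for `nn.ConvTranspose2d` with dilation 1 and no output padding. The values of `nz`, `ngf` and `nc` are the ones passed to `G(3,128,1024,100)`.

```python
def conv_transpose2d_out(size, kernel=4, stride=2, padding=1):
    """Output size of a transposed convolution (dilation=1, output_padding=0)."""
    return (size - 1) * stride - 2 * padding + kernel


nz, ngf, nc = 1024, 128, 3
channels = [nz, ngf * 4, ngf * 2, ngf, nc]

size = 6  # spatial size after the linear projection is reshaped
shapes = [(channels[0], size, size)]
for out_channels in channels[1:]:  # layer1 .. layer4
    size = conv_transpose2d_out(size)
    shapes.append((out_channels, size, size))

for shape in shapes:
    print(shape)
# (1024, 6, 6)
# (512, 12, 12)
# (256, 24, 24)
# (128, 48, 48)
# (3, 96, 96)
```

Under these assumptions the generator emits 3x96x96 images, the same size that the discriminator's `256*6*6` linear layer implies for its input.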

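d = D(3, 32)  # create the discriminator
g = G(3, 128, 1024, 100)  # create the generator

criterion = nn.BCELoss()  # binary cross-entropy loss
d_optimizer = torch.optim.Adam(d.parameters(), lr=0.0003)  # optimizer for the discriminator
g_optimizer = torch.optim.Adam(g.parameters(), lr=0.0003)  # optimizer for the generator

# Training function
def train(d, g, criterion, d_optimizer, g_optimizer,
          epochs=1, show_every=100, print_every=10):
    iter_count = 0
    for epoch in range(epochs):  # outer loop: epochs
        for inputs, _ in trainloader:  # each batch holds up to 5 images
            # Use the actual batch size: the last batch may hold fewer than 5 images,
            # and a hard-coded 5 would then mismatch the label tensor.
            batch_size = inputs.size(0)
            real_inputs = inputs  # features of the real images
            fake_inputs = g(torch.randn(batch_size, 100))  # generator produces fake images
            real_labels = torch.ones(real_inputs.size(0))  # real images are labeled 1
            fake_labels = torch.zeros(fake_inputs.size(0))  # fake images are labeled 0

            # Discriminator scores each real image with a value between 0 and 1
            real_outputs = d(real_inputs)
            d_loss_real = criterion(real_outputs, real_labels)  # discriminator loss on real images
            real_scores = real_outputs

            # Discriminator scores each fake image with a value between 0 and 1.
            # detach() keeps this backward pass from flowing into the generator.
            fake_outputs = d(fake_inputs.detach())
            d_loss_fake = criterion(fake_outputs, fake_labels)  # discriminator loss on fake images
            fake_scores = fake_outputs

            d_loss = d_loss_real + d_loss_fake  # total discriminator loss
            d_optimizer.zero_grad()  # clear gradients from the previous step
            d_loss.backward()  # backpropagate to compute gradients
            d_optimizer.step()  # update discriminator parameters

            fake_inputs = g(torch.randn(batch_size, 100))  # generator produces fake images
            fake_outputs = d(fake_inputs)
            # The generator is rewarded when the discriminator labels its images as real
            g_loss = criterion(fake_outputs, real_labels)
            g_optimizer.zero_grad()  # clear gradients from the previous step
            g_loss.backward()  # backpropagate to compute gradients
            g_optimizer.step()  # update generator parameters

            if (iter_count % show_every == 0):
                print('Epoch:{},Iter: {}, D: {:.4}, G:{:.4}'.format(
                    epoch, iter_count, d_loss.item(), g_loss.item()))
                picname = "Epoch_" + str(epoch) + "Iter_" + str(iter_count)
                imshow(torchvision.utils.make_grid(fake_inputs.detach()), picname)

            if (iter_count % print_every == 0):
                print('Epoch:{},Iter: {}, D: {:.4}, G:{:.4}'.format(
                    epoch, iter_count, d_loss.item(), g_loss.item()))
            iter_count += 1
    print("Finish Training,OK!")

train(d, g, criterion, d_optimizer, g_optimizer, epochs=1)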
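The training loop computes the generator loss by scoring fake images against `real_labels`. The sketch below illustrates why that works, using a hand-written binary cross-entropy in plain Python rather than `nn.BCELoss`. The helper `bce` and the score values 0.9 and 0.1 are illustrative assumptions, not values from the original. The helper averages over the batch, which is the default reduction of `nn.BCELoss`.

```python
import math


def bce(probs, labels):
    """Mean binary cross-entropy: -[y*log(p) + (1-y)*log(1-p)], averaged over the batch."""
    total = 0.0
    for p, y in zip(probs, labels):
        total += -(y * math.log(p) + (1 - y) * math.log(1 - p))
    return total / len(probs)


ones = [1.0, 1.0]    # plays the role of real_labels
zeros = [0.0, 0.0]   # plays the role of fake_labels

fooled = [0.9, 0.9]  # discriminator scores the fake images as likely real
caught = [0.1, 0.1]  # discriminator scores the fake images as likely fake

g_loss_fooled = bce(fooled, ones)        # small: the generator is doing well
g_loss_caught = bce(caught, ones)        # large: the generator is doing badly
d_loss_fake_caught = bce(caught, zeros)  # small: the discriminator is doing well

print(round(g_loss_fooled, 4))       # 0.1054
print(round(g_loss_caught, 4))       # 2.3026
print(round(d_loss_fake_caught, 4))  # 0.1054
```

Labeling the fakes as 1 turns the generator loss into -log(D(G(z))), so it shrinks exactly when the discriminator is fooled, while the same scores judged against label 0 give the discriminator's view of the same batch.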